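1. Weight Initialization

A deep neural network (DNN) has many layers, and in every training step the gradient is propagated layer by layer from back to front. If the per-layer factor is less than 1, the gradient can shrink toward 0, so the lower layers barely change; this is the vanishing gradients problem. If the factor is greater than 1, the gradient becomes very large, the updates keep oscillating, and the network fails to converge. Many tricks have therefore been developed for training DNNs.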
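To make the effect concrete, here is a minimal sketch that assumes, as a simplification, that every layer multiplies the gradient by the same factor. The function name `backprop_scale` and the factors 0.5 and 1.5 are illustrative choices, not values from the article.

```python
def backprop_scale(per_layer_factor, n_layers):
    """Scale of a gradient after passing back through n_layers layers,
    assuming each layer multiplies it by the same factor (a simplification)."""
    scale = 1.0
    for _ in range(n_layers):
        scale *= per_layer_factor
    return scale


vanishing = backprop_scale(0.5, 30)  # factor < 1: shrinks toward 0
exploding = backprop_scale(1.5, 30)  # factor > 1: grows very large
print(vanishing, exploding)
```

Even with only 30 layers the two cases differ by many orders of magnitude, which is why the lower layers either stop learning or receive unstable updates.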

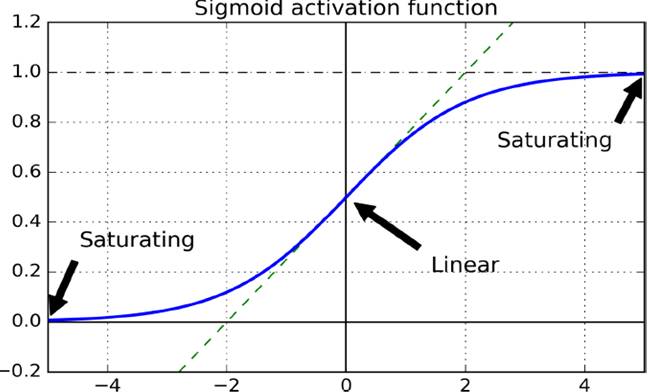

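Early on, weights were initialized randomly from a normal distribution, which makes the variance, originally 1, grow larger and larger from layer to layer. Take the sigmoid function in the figure below: near 0 it is approximately linear and its gradient is sensitive, but far from 0 it saturates, so even a very strong input produces a dull, almost unchanged output.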

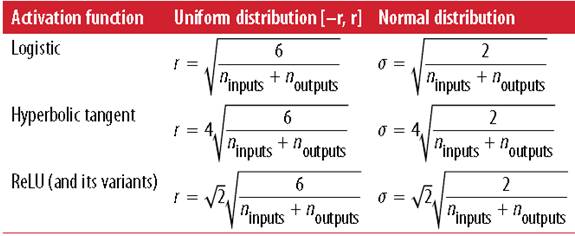

With Xavier initialization, the weights are set according to fan-in (the number of input neurons) and fan-out (the number of output neurons).

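Initialization strategies have also been designed for the different activation functions, as shown in the figure below (uniform distribution on the left; normal distribution on the right, which is the more commonly used one).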
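As a rough illustration, the sketch below uses the commonly cited Xavier (Glorot) formulas for the logistic activation: a uniform range of ±sqrt(6 / (fan_in + fan_out)) and a normal standard deviation of sqrt(2 / (fan_in + fan_out)). The function names and the layer size 300 × 100 are assumptions for the example; other activation functions use different constants.

```python
import math
import random


def xavier_uniform(fan_in, fan_out, rng):
    """Weights drawn uniformly from [-limit, limit]."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_out)]
            for _ in range(fan_in)]


def xavier_normal(fan_in, fan_out, rng):
    """Weights drawn from a zero-mean normal distribution."""
    std = math.sqrt(2.0 / (fan_in + fan_out))
    return [[rng.gauss(0.0, std) for _ in range(fan_out)]
            for _ in range(fan_in)]


rng = random.Random(0)  # fixed seed so the example is repeatable
w_uniform = xavier_uniform(300, 100, rng)
w_normal = xavier_normal(300, 100, rng)
```

The more connections a layer has, the smaller the initial weights, which keeps the variance of the signal roughly constant from layer to layer.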

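ReLU is the usual choice of activation, but it outputs 0 for every input below 0, so a neuron whose inputs stay negative stops learning (dying ReLUs).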

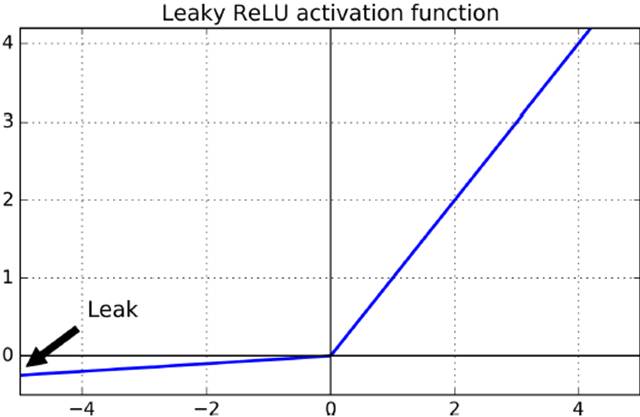

a. Leaky ReLU

An improvement is Leaky ReLU = max(αx, x), which keeps a small signal when the input is below 0.

Application:

```python
import tensorflow as tf  # TensorFlow 1.x API
from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("/tmp/data/")


def leaky_relu(z, alpha=0.01, name=None):
    # Not shown in the original listing; alpha=0.01 is an assumed slope.
    return tf.maximum(alpha * z, z, name=name)


tf.reset_default_graph()  # stands in for the undefined reset_graph() helper

n_inputs = 28 * 28  # MNIST
n_hidden1 = 300
n_hidden2 = 100
n_outputs = 10

X = tf.placeholder(tf.float32, shape=(None, n_inputs), name="X")
y = tf.placeholder(tf.int64, shape=(None), name="y")

with tf.name_scope("dnn"):
    hidden1 = tf.layers.dense(X, n_hidden1, activation=leaky_relu, name="hidden1")
    hidden2 = tf.layers.dense(hidden1, n_hidden2, activation=leaky_relu, name="hidden2")
    logits = tf.layers.dense(hidden2, n_outputs, name="outputs")

with tf.name_scope("loss"):
    xentropy = tf.nn.sparse_softmax_cross_entropy_with_logits(labels=y, logits=logits)
    loss = tf.reduce_mean(xentropy, name="loss")

learning_rate = 0.01

with tf.name_scope("train"):
    optimizer = tf.train.GradientDescentOptimizer(learning_rate)
    training_op = optimizer.minimize(loss)

with tf.name_scope("eval"):
    correct = tf.nn.in_top_k(logits, y, 1)
    accuracy = tf.reduce_mean(tf.cast(correct, tf.float32))

init = tf.global_variables_initializer()
saver = tf.train.Saver()

n_epochs = 40
batch_size = 50

with tf.Session() as sess:
    init.run()
    for epoch in range(n_epochs):
        for iteration in range(mnist.train.num_examples // batch_size):
            X_batch, y_batch = mnist.train.next_batch(batch_size)
            sess.run(training_op, feed_dict={X: X_batch, y: y_batch})
        if epoch % 5 == 0:
            # Report accuracy on the last mini-batch and on the validation set
            acc_train = accuracy.eval(feed_dict={X: X_batch, y: y_batch})
            acc_test = accuracy.eval(feed_dict={X: mnist.validation.images,
                                                y: mnist.validation.labels})
            print(epoch, "Batch accuracy:", acc_train, "Validation accuracy:", acc_test)

    save_path = saver.save(sess, "./my_model_final.ckpt")
```

b. ELU

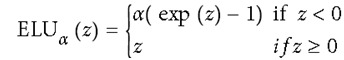

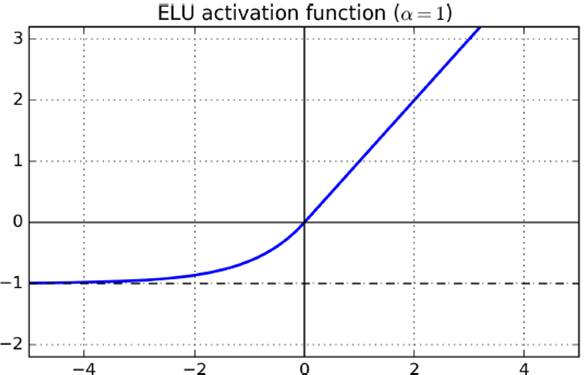

Another improvement is ELU, which follows an exponential curve when the neuron's input is below 0.
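The two variants can be compared side by side in a small sketch. The default values `alpha=0.01` for Leaky ReLU and `alpha=1.0` for ELU are common choices assumed here, not values given in the article.

```python
import math


def leaky_relu(z, alpha=0.01):
    """max(alpha * z, z): a small linear slope for negative inputs."""
    return max(alpha * z, z)


def elu(z, alpha=1.0):
    """Identity for z >= 0, alpha * (exp(z) - 1) for z < 0."""
    return z if z >= 0 else alpha * (math.exp(z) - 1.0)


for z in (-5.0, -1.0, 0.0, 2.0):
    print(z, leaky_relu(z), elu(z))
```

For strongly negative inputs ELU levels off at -alpha instead of continuing downward, while both functions keep a nonzero gradient below 0, which is what avoids dying neurons.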

c. SELU

A more recently proposed variant is SELU (only the key code is shown):

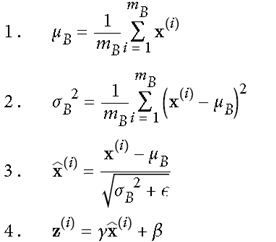

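```python
# Assumption: the original does not show how selu is defined;
# tf.nn.selu (TensorFlow >= 1.4) is used here as a stand-in.
selu = tf.nn.selu

with tf.name_scope("dnn"):
    hidden1 = tf.layers.dense(X, n_hidden1, activation=selu, name="hidden1")
    hidden2 = tf.layers.dense(hidden1, n_hidden2, activation=selu, name="hidden2")
    logits = tf.layers.dense(hidden2, n_outputs, name="outputs")

# Training: SELU expects standardized inputs, so scale each feature first
means = mnist.train.images.mean(axis=0, keepdims=True)
stds = mnist.train.images.std(axis=0, keepdims=True) + 1e-10

with tf.Session() as sess:
    init.run()
    for epoch in range(n_epochs):
        for iteration in range(mnist.train.num_examples // batch_size):
            X_batch, y_batch = mnist.train.next_batch(batch_size)
            X_batch_scaled = (X_batch - means) / stds
            sess.run(training_op, feed_dict={X: X_batch_scaled, y: y_batch})
        if epoch % 5 == 0:
            acc_train = accuracy.eval(feed_dict={X: X_batch_scaled, y: y_batch})
            X_val_scaled = (mnist.validation.images - means) / stds
            acc_test = accuracy.eval(feed_dict={X: X_val_scaled,
                                                y: mnist.validation.labels})
            print(epoch, "Batch accuracy:", acc_train, "Validation accuracy:", acc_test)

    save_path = saver.save(sess, "./my_model_final_selu.ckpt")
```

3. Batch Normalization

In 2015, researchers proposed that, since training already works on mini-batches, each batch can also be normalized just before the activation function is applied, so that the data passed on has a well-behaved distribution. The normalized values are then scaled and shifted by two learned parameters, scaling (γ) and shifting (β), before being handed to the activation function. The steps are: compute the mean and variance of the mini-batch, normalize the inputs with them, then scale by γ and shift by β.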
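The steps can be written out for a single feature as a minimal sketch. The function name `batch_norm`, the smoothing term `eps=1e-5`, and the sample values are assumptions for the example; a real layer learns γ and β during training.

```python
import math


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature over a mini-batch, then scale and shift."""
    n = len(batch)
    mean = sum(batch) / n                             # 1. mini-batch mean
    var = sum((x - mean) ** 2 for x in batch) / n     # 2. mini-batch variance
    normalized = [(x - mean) / math.sqrt(var + eps)   # 3. zero mean, unit variance
                  for x in batch]
    return [gamma * x_hat + beta for x_hat in normalized]  # 4. scale and shift


out = batch_norm([1.0, 2.0, 3.0, 4.0], gamma=2.0, beta=0.5)
print(out)
```

After the transformation the batch has mean β and a standard deviation close to γ, whatever the scale of the raw inputs was.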

Batch normalization is now implemented in TensorFlow as a separate layer that can be called directly.

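At test time there is no mini-batch, so the mean and standard deviation collected during training (tracked as moving averages) are used directly. The implementation is as follows: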

```python
from functools import partial

import tensorflow as tf  # TensorFlow 1.x API
from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("/tmp/data/")

n_inputs = 28 * 28
n_hidden1 = 300
n_hidden2 = 100
n_outputs = 10
batch_norm_momentum = 0.9
learning_rate = 0.01  # assumed; same value as in the earlier listing

X = tf.placeholder(tf.float32, shape=(None, n_inputs), name="X")
y = tf.placeholder(tf.int64, shape=(None), name="y")
training = tf.placeholder_with_default(False, shape=(), name='training')

with tf.name_scope("dnn"):
    he_init = tf.contrib.layers.variance_scaling_initializer()

    # Acts as a separate layer
    my_batch_norm_layer = partial(
        tf.layers.batch_normalization,
        training=training,
        momentum=batch_norm_momentum)

    my_dense_layer = partial(
        tf.layers.dense,
        kernel_initializer=he_init)

    hidden1 = my_dense_layer(X, n_hidden1, name="hidden1")
    bn1 = tf.nn.elu(my_batch_norm_layer(hidden1))  # ELU as the activation function
    hidden2 = my_dense_layer(bn1, n_hidden2, name="hidden2")
    bn2 = tf.nn.elu(my_batch_norm_layer(hidden2))
    logits_before_bn = my_dense_layer(bn2, n_outputs, name="outputs")
    logits = my_batch_norm_layer(logits_before_bn)  # the output layer is batch normalized too

with tf.name_scope("loss"):
    xentropy = tf.nn.sparse_softmax_cross_entropy_with_logits(labels=y, logits=logits)
    loss = tf.reduce_mean(xentropy, name="loss")

with tf.name_scope("train"):
    optimizer = tf.train.GradientDescentOptimizer(learning_rate)
    training_op = optimizer.minimize(loss)

with tf.name_scope("eval"):
    correct = tf.nn.in_top_k(logits, y, 1)
    accuracy = tf.reduce_mean(tf.cast(correct, tf.float32))

init = tf.global_variables_initializer()
saver = tf.train.Saver()

n_epochs = 20
batch_size = 200

# The moving mean and variance are updated by extra ops
# that have to be run explicitly during training.
extra_update_ops = tf.get_collection(tf.GraphKeys.UPDATE_OPS)

# Training and testing behave differently (see the `training` placeholder).
with tf.Session() as sess:
    init.run()
    for epoch in range(n_epochs):
        for iteration in range(mnist.train.num_examples // batch_size):
            X_batch, y_batch = mnist.train.next_batch(batch_size)
            sess.run([training_op, extra_update_ops],
                     feed_dict={training: True, X: X_batch, y: y_batch})
        accuracy_val = accuracy.eval(feed_dict={X: mnist.test.images,
                                                y: mnist.test.labels})
        print(epoch, "Test accuracy:", accuracy_val)

    save_path = saver.save(sess, "./my_model_final.ckpt")
```

4. Gradient Clipping

The gradients are clipped before they are propagated backward, which mitigates the exploding gradients problem to some extent. (Now that batch normalization is available, this method is not used much.)

```python
threshold = 1.0

optimizer = tf.train.GradientDescentOptimizer(learning_rate)
grads_and_vars = optimizer.compute_gradients(loss)
# Clip every gradient component to the range [-threshold, threshold]
capped_gvs = [(tf.clip_by_value(grad, -threshold, threshold), var)
              for grad, var in grads_and_vars]
training_op = optimizer.apply_gradients(capped_gvs)
```

5. Reusing Pretrained Layers

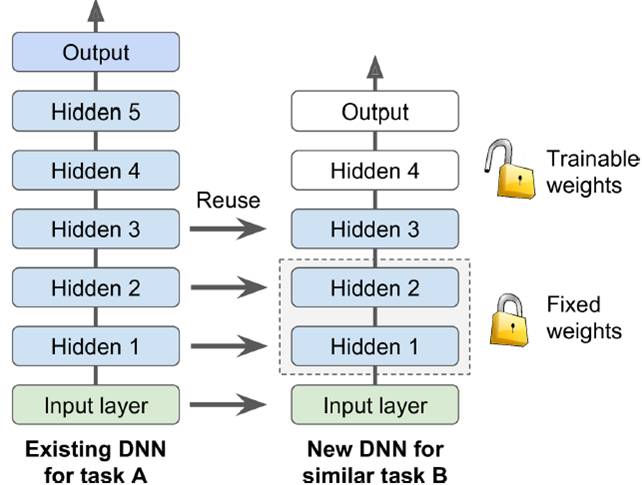

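Slightly modifying a previously trained model saves time. This is used frequently when training deep models, because they have so many layers.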

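For similar problems, the output layer, which is tightly coupled to the task (for example through the number of classes), and the last hidden layer feeding it generally cannot be reused directly and still have to be trained yourself.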

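As the figure shows, once a complex net has been trained and is transferred to a relatively simple one, hidden1 and hidden2 stay fixed, hidden3 is adjusted slightly, and hidden4 and the output layer are trained from scratch. This saves a great deal of time when you do not have your own GPU.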

a. Freezing the Lower Layers

Keep the parameters of the lower layers fixed during training, which is what Freezing the Lower Layers means.

```python
import tensorflow as tf  # TensorFlow 1.x API

# mnist, learning_rate, n_epochs and batch_size are assumed to be
# defined as in the earlier listings.

# MNIST example
n_inputs = 28 * 28  # MNIST
n_hidden1 = 300  # reused
n_hidden2 = 50   # reused
n_hidden3 = 50   # reused
n_hidden4 = 20   # new!
n_outputs = 10   # new!

X = tf.placeholder(tf.float32, shape=(None, n_inputs), name="X")
y = tf.placeholder(tf.int64, shape=(None), name="y")

with tf.name_scope("dnn"):
    hidden1 = tf.layers.dense(X, n_hidden1, activation=tf.nn.relu,
                              name="hidden1")  # reused, frozen
    hidden2 = tf.layers.dense(hidden1, n_hidden2, activation=tf.nn.relu,
                              name="hidden2")  # reused, frozen
    hidden2_stop = tf.stop_gradient(hidden2)   # no gradient flows below this point
    hidden3 = tf.layers.dense(hidden2_stop, n_hidden3, activation=tf.nn.relu,
                              name="hidden3")  # reused, not frozen
    hidden4 = tf.layers.dense(hidden3, n_hidden4, activation=tf.nn.relu,
                              name="hidden4")  # new!
    logits = tf.layers.dense(hidden4, n_outputs, name="outputs")  # new!

with tf.name_scope("loss"):
    xentropy = tf.nn.sparse_softmax_cross_entropy_with_logits(labels=y, logits=logits)
    loss = tf.reduce_mean(xentropy, name="loss")

with tf.name_scope("eval"):
    correct = tf.nn.in_top_k(logits, y, 1)
    accuracy = tf.reduce_mean(tf.cast(correct, tf.float32), name="accuracy")

with tf.name_scope("train"):
    optimizer = tf.train.GradientDescentOptimizer(learning_rate)
    training_op = optimizer.minimize(loss)

reuse_vars = tf.get_collection(tf.GraphKeys.GLOBAL_VARIABLES,
                               scope="hidden[123]")  # regular expression
reuse_vars_dict = dict([(var.op.name, var) for var in reuse_vars])
restore_saver = tf.train.Saver(reuse_vars_dict)  # to restore layers 1-3

init = tf.global_variables_initializer()
saver = tf.train.Saver()

with tf.Session() as sess:
    init.run()
    restore_saver.restore(sess, "./my_model_final.ckpt")

    for epoch in range(n_epochs):
        for iteration in range(mnist.train.num_examples // batch_size):
            X_batch, y_batch = mnist.train.next_batch(batch_size)
            sess.run(training_op, feed_dict={X: X_batch, y: y_batch})
        accuracy_val = accuracy.eval(feed_dict={X: mnist.test.images,
                                                y: mnist.test.labels})
        print(epoch, "Test accuracy:", accuracy_val)

    save_path = saver.save(sess, "./my_new_model_final.ckpt")
```

b. Caching the Frozen Layers

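Because the frozen layers never change, their outputs can be computed once and cached, and training then starts directly from the first layer after the frozen ones (Caching the Frozen Layers).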

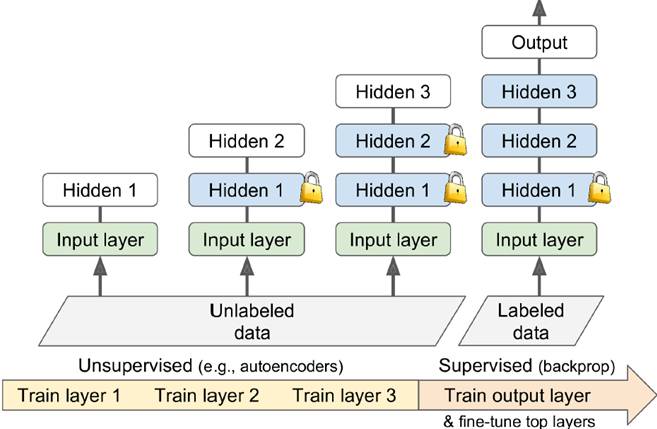

```python
import numpy as np
import tensorflow as tf  # TensorFlow 1.x API

# mnist, learning_rate, n_epochs and batch_size are assumed to be
# defined as in the earlier listings.

# MNIST example
n_inputs = 28 * 28  # MNIST
n_hidden1 = 300  # reused
n_hidden2 = 50   # reused
n_hidden3 = 50   # reused
n_hidden4 = 20   # new!
n_outputs = 10   # new!

X = tf.placeholder(tf.float32, shape=(None, n_inputs), name="X")
y = tf.placeholder(tf.int64, shape=(None), name="y")

with tf.name_scope("dnn"):
    hidden1 = tf.layers.dense(X, n_hidden1, activation=tf.nn.relu,
                              name="hidden1")  # reused, frozen
    hidden2 = tf.layers.dense(hidden1, n_hidden2, activation=tf.nn.relu,
                              name="hidden2")  # reused, frozen & cached
    hidden2_stop = tf.stop_gradient(hidden2)
    hidden3 = tf.layers.dense(hidden2_stop, n_hidden3, activation=tf.nn.relu,
                              name="hidden3")  # reused, not frozen
    hidden4 = tf.layers.dense(hidden3, n_hidden4, activation=tf.nn.relu,
                              name="hidden4")  # new!
    logits = tf.layers.dense(hidden4, n_outputs, name="outputs")  # new!

with tf.name_scope("loss"):
    xentropy = tf.nn.sparse_softmax_cross_entropy_with_logits(labels=y, logits=logits)
    loss = tf.reduce_mean(xentropy, name="loss")

with tf.name_scope("eval"):
    correct = tf.nn.in_top_k(logits, y, 1)
    accuracy = tf.reduce_mean(tf.cast(correct, tf.float32), name="accuracy")

with tf.name_scope("train"):
    optimizer = tf.train.GradientDescentOptimizer(learning_rate)
    training_op = optimizer.minimize(loss)

reuse_vars = tf.get_collection(tf.GraphKeys.GLOBAL_VARIABLES,
                               scope="hidden[123]")  # regular expression
reuse_vars_dict = dict([(var.op.name, var) for var in reuse_vars])
restore_saver = tf.train.Saver(reuse_vars_dict)  # to restore layers 1-3

init = tf.global_variables_initializer()
saver = tf.train.Saver()

n_batches = mnist.train.num_examples // batch_size

with tf.Session() as sess:
    init.run()
    restore_saver.restore(sess, "./my_model_final.ckpt")

    # Run the frozen layers once over the whole data set and keep the outputs
    h2_cache = sess.run(hidden2, feed_dict={X: mnist.train.images})
    h2_cache_test = sess.run(hidden2, feed_dict={X: mnist.test.images})  # not shown in the book

    for epoch in range(n_epochs):
        shuffled_idx = np.random.permutation(mnist.train.num_examples)
        hidden2_batches = np.array_split(h2_cache[shuffled_idx], n_batches)
        y_batches = np.array_split(mnist.train.labels[shuffled_idx], n_batches)
        for hidden2_batch, y_batch in zip(hidden2_batches, y_batches):
            # Feed the cached activations directly into hidden2
            sess.run(training_op, feed_dict={hidden2: hidden2_batch, y: y_batch})

        accuracy_val = accuracy.eval(feed_dict={hidden2: h2_cache_test,  # not shown
                                                y: mnist.test.labels})   # not shown
        print(epoch, "Test accuracy:", accuracy_val)                     # not shown

    save_path = saver.save(sess, "./my_new_model_final.ckpt")
```

6. Unsupervised Pretraining

This method gave people a new understanding of how deep networks can be trained: cheaper unlabeled data can be put to use. The network is first pretrained on the unlabeled data to obtain a roughly reasonable set of weights, and the labeled data is then used for the actual training with backpropagation, which makes deep models more practical.

Pretraining can also be done on a similar model.

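7. Faster Optimizers

These optimizers improve on traditional SGD.

They include Momentum optimization (the earliest proposal, based on inertia), Nesterov Accelerated Gradient, AdaGrad (adaptive gradient; each dimension descends at its own rate), RMSProp, and Adam optimization (combines the ideas of AdaGrad and momentum; the most widely used and a common default optimizer).

a. Momentum Optimization

Momentum remembers the direction of the previously computed gradients and adds it, as inertia, to the current gradient. Picture going downhill: SGD stands still at every step and only judges where the slope is steepest right now, whereas momentum keeps correcting its direction while running, which is clearly more effective.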
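A minimal sketch of the update on a one-dimensional problem, assuming the common formulation m ← βm − η∇J(θ), θ ← θ + m. The test function f(θ) = θ², the function name `momentum_descent`, and the hyperparameter values are illustrative assumptions.

```python
def momentum_descent(grad_fn, theta, learning_rate=0.1, beta=0.9, n_steps=200):
    """Gradient descent with momentum on a scalar parameter."""
    m = 0.0  # momentum vector (a scalar here)
    for _ in range(n_steps):
        # Keep a fraction beta of the old velocity, add the new gradient step
        m = beta * m - learning_rate * grad_fn(theta)
        theta = theta + m
    return theta


# f(theta) = theta^2, so the gradient is 2 * theta and the minimum is at 0
theta_final = momentum_descent(lambda t: 2.0 * t, theta=5.0)
print(theta_final)
```

The parameter overshoots and oscillates around the minimum at first, because the accumulated velocity carries it past 0, and then settles as the velocity decays.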

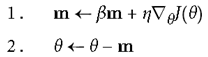

b. Nesterov Accelerated Gradient

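Instead of only computing the gradient at the current point, Nesterov looks one step ahead: measuring the gradient slightly further along the momentum direction is somewhat more accurate.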
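The only change relative to plain momentum is where the gradient is measured. The sketch below assumes the usual formulation m ← βm − η∇J(θ + βm), θ ← θ + m; the function name and the test function f(θ) = θ² are illustrative.

```python
def nesterov_descent(grad_fn, theta, learning_rate=0.1, beta=0.9, n_steps=200):
    """Nesterov Accelerated Gradient on a scalar parameter."""
    m = 0.0
    for _ in range(n_steps):
        lookahead = theta + beta * m  # where momentum is about to take us
        m = beta * m - learning_rate * grad_fn(lookahead)
        theta = theta + m
    return theta


# f(theta) = theta^2, gradient 2 * theta, minimum at 0
theta_final = nesterov_descent(lambda t: 2.0 * t, theta=5.0)
print(theta_final)
```

Because the gradient at the look-ahead point already pushes back when the momentum is about to overshoot, the oscillations are damped more strongly than with plain momentum.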

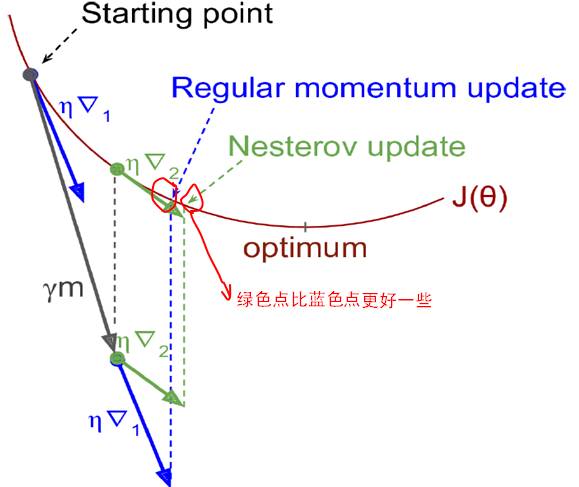

c. AdaGrad

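The squared gradients are accumulated separately for each dimension and used as a denominator that scales the current gradient, so every dimension descends at its own rate. As the figure shows, the horizontal axis is much flatter than the vertical one. Plain gradient descent simply moves along the normal direction, whereas AdaGrad scales down the step along the steep θ2 more than along the gentle θ1, which points the update more directly toward the optimum.

It also has a drawback: s keeps accumulating, the denominator grows larger and larger, and the updates may eventually stall.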
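The following sketch assumes the usual update s ← s + g², θ ← θ − ηg / sqrt(s + ε), applied per dimension. The elongated bowl f = 0.1·θ1² + 10·θ2², the function name `adagrad`, and the hyperparameters are made up for the example.

```python
import math


def adagrad(grad_fn, theta, learning_rate=0.5, eps=1e-10, n_steps=100):
    """AdaGrad on a list of parameters; returns (theta, accumulator)."""
    s = [0.0] * len(theta)  # running sum of squared gradients per dimension
    for _ in range(n_steps):
        g = grad_fn(theta)
        s = [s_i + g_i * g_i for s_i, g_i in zip(s, g)]
        theta = [t - learning_rate * g_i / math.sqrt(s_i + eps)
                 for t, g_i, s_i in zip(theta, g, s)]
    return theta, s


def bowl_gradient(th):
    # Gradient of f = 0.1 * theta1^2 + 10 * theta2^2
    return [0.2 * th[0], 20.0 * th[1]]


theta, s = adagrad(bowl_gradient, [5.0, 5.0])
print(theta, s)
```

In this example the slope along θ2 is 100 times steeper than along θ1, yet both coordinates follow almost the same path, because each gradient is divided by its own accumulated magnitude. The accumulator only ever grows, which is the stalling problem described above.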

d. RMSProp (Adadelta)

Only part of the history is accumulated: a decay rate is added so that only the gradients from the most recent steps count.
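The difference from AdaGrad is easiest to see on the accumulator itself. This sketch assumes the usual update s ← βs + (1 − β)g²; the function names, the decay rate 0.9, and the constant gradient of 2.0 are illustrative assumptions.

```python
import math


def rmsprop_step(theta, g, s, learning_rate=0.01, decay=0.9, eps=1e-10):
    """One RMSProp update for a scalar parameter; returns (theta, s)."""
    s = decay * s + (1.0 - decay) * g * g  # exponentially decaying average
    theta = theta - learning_rate * g / math.sqrt(s + eps)
    return theta, s


def adagrad_accumulator(gradients):
    """AdaGrad keeps the full sum of squared gradients."""
    return sum(g * g for g in gradients)


gradients = [2.0] * 200  # a constant gradient, for comparison only
theta, s = 0.0, 0.0
for g in gradients:
    theta, s = rmsprop_step(theta, g, s)

adagrad_s = adagrad_accumulator(gradients)
print(s, adagrad_s)
```

With a constant gradient the RMSProp accumulator levels off at g², while the AdaGrad sum keeps growing with the number of steps, so RMSProp's effective learning rate does not shrink toward zero.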

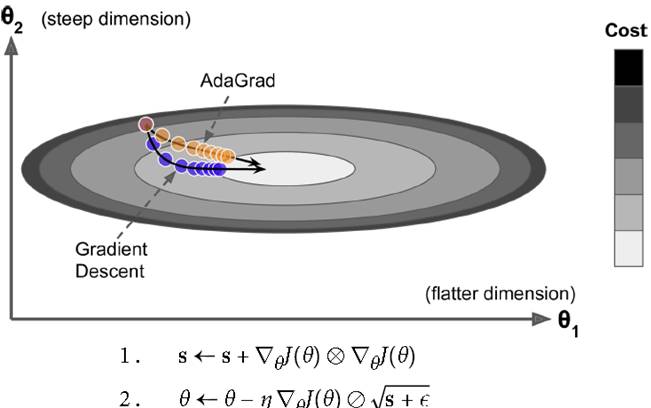

e. Adam Optimization

Adam is currently the most widely used method and generally performs very well; it combines the advantages of AdaGrad and Momentum.
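A compact sketch of the update, assuming the standard formulation with bias-corrected first and second moment estimates. The hyperparameters β1 = 0.9, β2 = 0.999, ε = 1e-8 are the commonly quoted defaults; the learning rate 0.1 and the test function f(θ) = θ² are chosen only for this example.

```python
import math


def adam(grad_fn, theta, learning_rate=0.1, beta1=0.9, beta2=0.999,
         eps=1e-8, n_steps=500):
    """Adam on a scalar parameter."""
    m, s = 0.0, 0.0
    for t in range(1, n_steps + 1):
        g = grad_fn(theta)
        m = beta1 * m + (1.0 - beta1) * g      # momentum-style average of gradients
        s = beta2 * s + (1.0 - beta2) * g * g  # RMSProp-style average of squares
        m_hat = m / (1.0 - beta1 ** t)         # bias correction for early steps
        s_hat = s / (1.0 - beta2 ** t)
        theta -= learning_rate * m_hat / (math.sqrt(s_hat) + eps)
    return theta


def gradient(t):
    return 2.0 * t  # gradient of f(theta) = theta^2


after_one_step = adam(gradient, 5.0, n_steps=1)
after_many_steps = adam(gradient, 5.0, n_steps=500)
print(after_one_step, after_many_steps)
```

Thanks to the bias correction, the very first step has a size of roughly the learning rate regardless of how large the gradient is.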

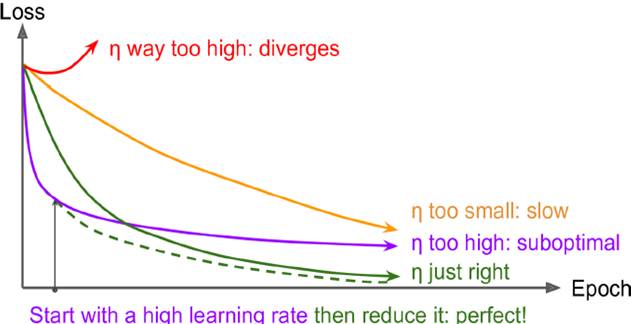

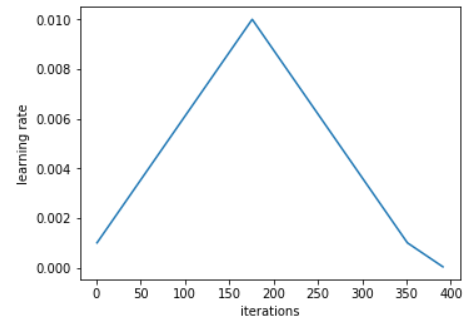

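Setting the learning rate is also important. As the figure shows, if it is too large, training does not converge to the optimum; if it is too small, convergence is slowest. Ideally the learning rate is reduced as training progresses: large at first, small later.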

a. Exponential Scheduling

The learning rate is decreased exponentially.
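Written as a plain formula, the schedule is initial_learning_rate × decay_rate^(global_step / decay_steps). The sketch below is a pure Python version of that formula; the parameter names follow `tf.train.exponential_decay`, and the numbers are example values.

```python
def exponential_decay(initial_learning_rate, global_step, decay_steps, decay_rate):
    """Learning rate after global_step training steps."""
    return initial_learning_rate * decay_rate ** (global_step / decay_steps)


for step in (0, 5000, 10000, 20000):
    print(step, exponential_decay(0.1, step, 10000, 0.1))
```

With these values the learning rate is divided by 10 every 10,000 steps, falling smoothly in between.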

```python
initial_learning_rate = 0.1
decay_steps = 10000
decay_rate = 1 / 10

global_step = tf.Variable(0, trainable=False)
learning_rate = tf.train.exponential_decay(initial_learning_rate, global_step,
                                           decay_steps, decay_rate)
optimizer = tf.train.MomentumOptimizer(learning_rate, momentum=0.9)
# Passing global_step makes minimize() increment it at every training step
training_op = optimizer.minimize(loss, global_step=global_step)
```

9. Avoiding Overfitting Through Regularization

These techniques address overfitting in deep models.

a. Early Stopping

Stop training when the error rate on the validation set starts to rise.
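One common way to make "starts to rise" robust against noise is to wait for a few epochs without improvement before stopping. The sketch below assumes such a `patience` rule; the function name and the list of validation errors are hypothetical.

```python
def early_stopping_epoch(validation_errors, patience=3):
    """Scan per-epoch validation errors and stop once `patience` consecutive
    epochs bring no improvement. Returns (best_epoch, best_error)."""
    best_error = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0
    for epoch, error in enumerate(validation_errors):
        if error < best_error:
            best_error, best_epoch = error, epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                break  # stop training; later epochs are never run
    return best_epoch, best_error


# Hypothetical validation errors, one value per epoch
errors = [0.30, 0.22, 0.18, 0.17, 0.19, 0.20, 0.21, 0.10]
best_epoch, best_error = early_stopping_epoch(errors)
print(best_epoch, best_error)
```

In practice the model is saved whenever the validation error improves, and that saved model is restored after stopping.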

b. L1 and L2 Regularization

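```python
import tensorflow as tf  # TensorFlow 1.x API
from tensorflow.contrib.framework import arg_scope
from tensorflow.contrib.layers import fully_connected

# Construct the neural network first (X, xentropy, n_hidden1, ... as in the
# earlier listings). The two options below are alternatives.

# Option 1: add the L1 penalty by hand.
# weights1 and weights2 are assumed to be the kernel variables of the layers,
# and scale the regularization strength.
base_loss = tf.reduce_mean(xentropy, name="avg_xentropy")
reg_losses = tf.reduce_sum(tf.abs(weights1)) + tf.reduce_sum(tf.abs(weights2))
loss = tf.add(base_loss, scale * reg_losses, name="loss")

# Option 2: let every layer register its own regularization loss.
with arg_scope(
        [fully_connected],
        weights_regularizer=tf.contrib.layers.l1_regularizer(scale=0.01)):
    hidden1 = fully_connected(X, n_hidden1, scope="hidden1")
    hidden2 = fully_connected(hidden1, n_hidden2, scope="hidden2")
    logits = fully_connected(hidden2, n_outputs, activation_fn=None, scope="out")

reg_losses = tf.get_collection(tf.GraphKeys.REGULARIZATION_LOSSES)
loss = tf.add_n([base_loss] + reg_losses, name="loss")
```

c. Dropout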

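Dropout is a newer regularization method. At every training step a random number is drawn for each neuron, and the neuron is dropped when the draw crosses a threshold, so a random subset of neurons is switched off. Results show that dropout is very effective against overfitting. It forces the remaining neurons not to concentrate too many features, lowers the effective complexity of the network, and improves robustness.

With dropout, training and test accuracy end up much closer together, which addresses overfitting to some extent.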
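The mechanism can be sketched in plain Python. This version assumes the "inverted dropout" convention, in which the surviving activations are divided by the keep probability during training so that nothing needs to change at test time; the function name and the sample data are illustrative.

```python
import random


def dropout(activations, rate, training, rng):
    """Zero each activation with probability `rate` during training and
    rescale the survivors by 1 / (1 - rate); do nothing at test time."""
    if not training:
        return list(activations)
    keep_prob = 1.0 - rate
    return [a / keep_prob if rng.random() >= rate else 0.0
            for a in activations]


rng = random.Random(42)  # fixed seed so the example is repeatable
activations = [1.0] * 10000
train_out = dropout(activations, rate=0.5, training=True, rng=rng)
test_out = dropout(activations, rate=0.5, training=False, rng=rng)
print(sum(train_out) / len(train_out), sum(test_out) / len(test_out))
```

About half of the activations are zeroed during training, yet the average activation stays close to its test-time value because of the rescaling.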

```python
training = tf.placeholder_with_default(False, shape=(), name='training')

dropout_rate = 0.5  # == 1 - keep_prob
X_drop = tf.layers.dropout(X, dropout_rate, training=training)

with tf.name_scope("dnn"):
    hidden1 = tf.layers.dense(X_drop, n_hidden1, activation=tf.nn.relu,
                              name="hidden1")
    hidden1_drop = tf.layers.dropout(hidden1, dropout_rate, training=training)
    hidden2 = tf.layers.dense(hidden1_drop, n_hidden2, activation=tf.nn.relu,
                              name="hidden2")
    hidden2_drop = tf.layers.dropout(hidden2, dropout_rate, training=training)
    logits = tf.layers.dense(hidden2_drop, n_outputs, name="outputs")
```

d. Max-Norm Regularization

Weights whose norm exceeds a threshold are clipped back to it, which makes the network somewhat more stable.
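For a single neuron's incoming weight vector w, the clipping rule is w ← w · r / ‖w‖₂ whenever ‖w‖₂ exceeds the threshold r. A minimal sketch, with a hypothetical function name and example vectors:

```python
import math


def clip_by_norm(weights, threshold):
    """Rescale a weight vector so that its L2 norm is at most `threshold`."""
    norm = math.sqrt(sum(w * w for w in weights))
    if norm <= threshold:
        return list(weights)  # already inside the allowed ball
    return [w * threshold / norm for w in weights]


print(clip_by_norm([3.0, 4.0], 1.0))  # norm 5.0, rescaled to norm 1.0
print(clip_by_norm([0.3, 0.4], 1.0))  # norm 0.5, left unchanged
```

The direction of the weight vector is preserved; only its length is capped. The clipping is applied after each training step rather than added to the loss.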

```python
def max_norm_regularizer(threshold, axes=1, name="max_norm",
                         collection="max_norm"):
    def max_norm(weights):
        clipped = tf.clip_by_norm(weights, clip_norm=threshold, axes=axes)
        clip_weights = tf.assign(weights, clipped, name=name)
        # The clipping ops are collected so they can be run after each training step
        tf.add_to_collection(collection, clip_weights)
        return None  # there is no regularization loss term
    return max_norm


max_norm_reg = max_norm_regularizer(threshold=1.0)
hidden1 = fully_connected(X, n_hidden1, scope="hidden1",
                          weights_regularizer=max_norm_reg)
```

e. Data Augmentation

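A deep network is a data-hungry model that needs a lot of data. Enlarging the data set, for example by mirroring images left to right, cropping at random positions, rotating by a random angle, varying the brightness, or changing the hue, increases the amount of data and reduces the likelihood of overfitting.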
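Two of these transformations are simple enough to sketch on an image stored as a nested list of pixel rows. The function names and the tiny 3 × 4 sample image are assumptions for the example; real pipelines would use an image library.

```python
import random


def flip_left_right(image):
    """Mirror an image horizontally (reverse every row)."""
    return [row[::-1] for row in image]


def random_crop(image, crop_height, crop_width, rng):
    """Cut a crop_height x crop_width window at a random position."""
    top = rng.randint(0, len(image) - crop_height)
    left = rng.randint(0, len(image[0]) - crop_width)
    return [row[left:left + crop_width]
            for row in image[top:top + crop_height]]


rng = random.Random(0)  # fixed seed so the example is repeatable
image = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12]]
flipped = flip_left_right(image)
cropped = random_crop(image, 2, 3, rng)
print(flipped)
print(cropped)
```

Each transformed copy keeps the original label, so a single labeled image yields several training examples.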

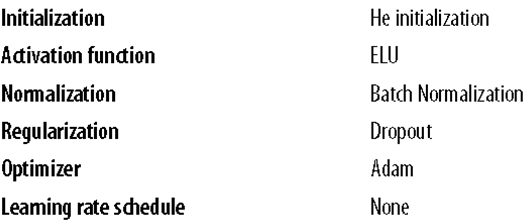

10. Default DNN Configuration


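Original title: [Machine Learning] Problems and Methods in DNN Training

Source: WeChat ID AI_shequ, WeChat official account 人工智能愛好者社區 (AI Enthusiasts Community). Please credit the source when reposting.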
